Gelsight Tactile Sensor

Visiting Research Student

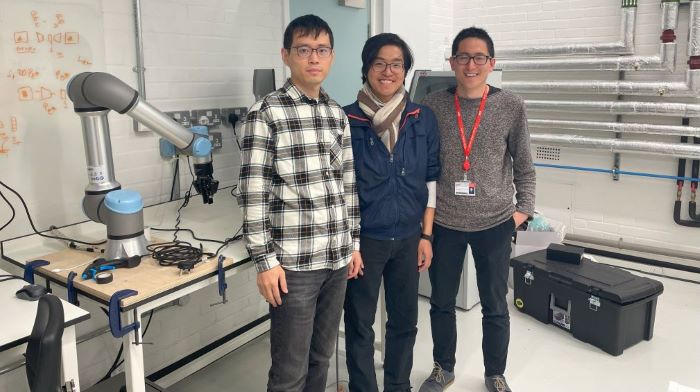

This research project began as my Overseas Final Year Project at King’s College London (KCL) in the Robot Perception Lab and continued through my subsequent internship in the Robot Manipulation Lab at A*STAR I2R, where I transferred the Gelsight fabrication techniques from KCL.

Project Description

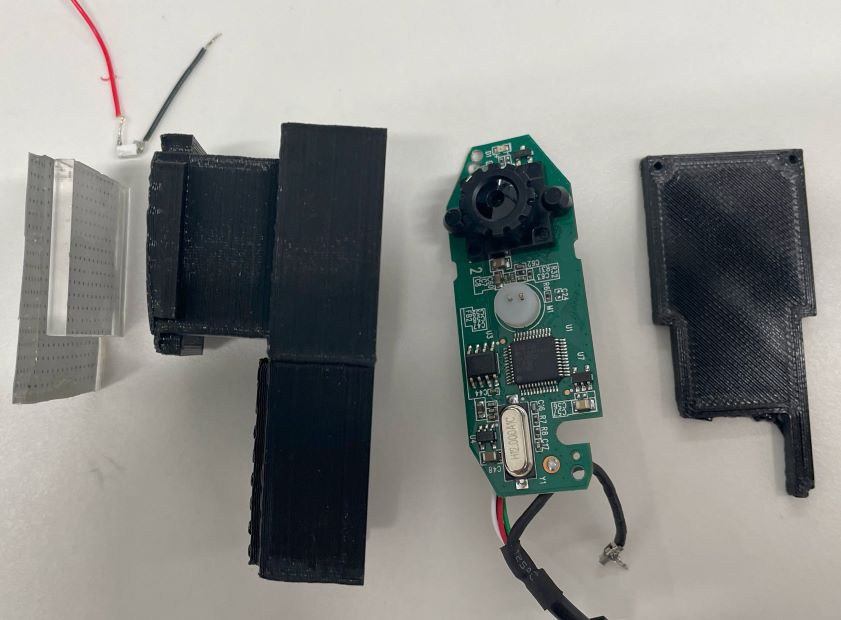

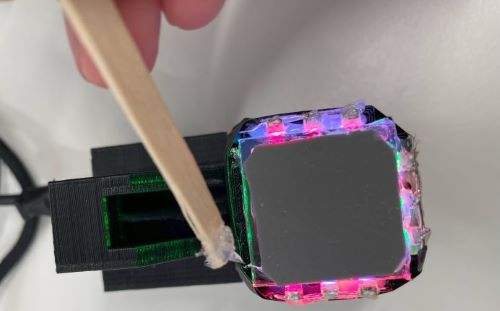

Gelsight is a high-resolution tactile sensor that uses a camera to record the movement of a silicone layer as it interacts with an object. My project focused on fabricating the Gelsight sensor, trying various computer vision algorithms to track the markers embedded in the silicone, and using this signal for robot manipulation.

There is a good YouTube video on Gelsight. In our use case, we focus on the relative motion between the object and the sensor for robotic manipulation. Hence, we introduce markers to make motion tracking easier.

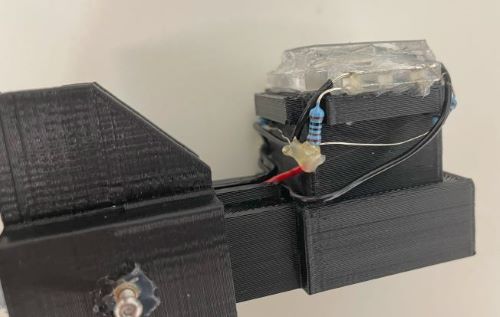
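To make the idea of relative motion a bit more concrete, here is a minimal sketch (not the project's actual code) of how matched marker positions could be reduced to a simple motion signal. It assumes the markers have already been matched between a reference frame and the current frame. The function name `summarize_marker_motion` and the choice of a mean translation plus a least-squares rotation angle are my own illustrative assumptions.

```python
import math


def summarize_marker_motion(ref_pts, cur_pts):
    """Return (mean_dx, mean_dy, rotation_rad) for matched marker positions.

    ref_pts, cur_pts: equal-length lists of (x, y) pixel coordinates, where
    cur_pts[i] is the same physical marker as ref_pts[i].
    Hypothetical helper for illustration only.
    """
    if len(ref_pts) != len(cur_pts) or not ref_pts:
        raise ValueError("need two non-empty, equal-length point lists")
    n = len(ref_pts)

    # Mean displacement: a rough proxy for shear (translation) of the gel.
    mean_dx = sum(c[0] - r[0] for r, c in zip(ref_pts, cur_pts)) / n
    mean_dy = sum(c[1] - r[1] for r, c in zip(ref_pts, cur_pts)) / n

    # Centroids of both point sets, used to separate rotation from translation.
    rcx = sum(p[0] for p in ref_pts) / n
    rcy = sum(p[1] for p in ref_pts) / n
    ccx = sum(p[0] for p in cur_pts) / n
    ccy = sum(p[1] for p in cur_pts) / n

    # Least-squares 2D rotation about the centroid:
    # angle = atan2(sum of cross products, sum of dot products).
    cross = 0.0
    dot = 0.0
    for (rx, ry), (cx, cy) in zip(ref_pts, cur_pts):
        ax, ay = rx - rcx, ry - rcy
        bx, by = cx - ccx, cy - ccy
        cross += ax * by - ay * bx
        dot += ax * bx + ay * by
    return mean_dx, mean_dy, math.atan2(cross, dot)
```

In this sketch, a large mean displacement would suggest the object is dragging the gel sideways, while a non-zero rotation would suggest the object is twisting against the sensor. Image coordinates usually have the y axis pointing down, so the sign of the angle depends on that convention.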

Fabrication

| Sensor breakdown | Sensor mounted |

|  |

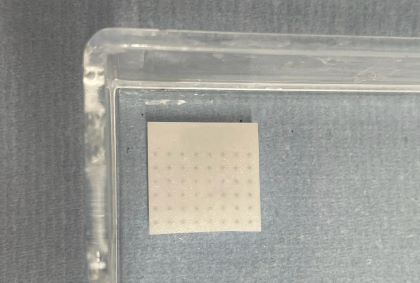

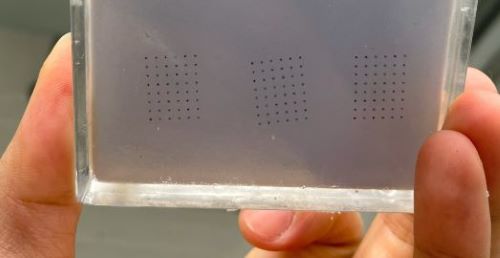

| 1. Cast the base silicone | 2. Apply markers |

| |

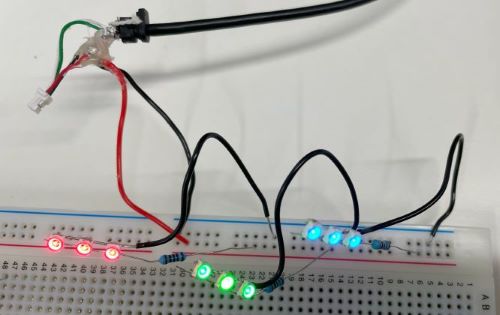

| 3. Apply the opaque silicone | 4. Install LED lightings |

|  |

| 5. Install casing , route the cables | 6. Secure the silicone layer |

|  |

Processing (GitHub)

Tracking of the markers embedded in the silicone layer

Check out the GitHub repository for starter code for image processing with the Gelsight sensor.
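As a rough illustration of what marker tracking involves, here is a minimal sketch that finds marker centroids in a grayscale frame and matches them between two frames. It is not the code from the repository. It uses only the Python standard library so it runs anywhere, whereas a real pipeline would more likely rely on an image-processing library. The function names, the fixed `threshold`, the assumption that markers are darker than the gel, and the greedy nearest-neighbour matching are all illustrative assumptions.

```python
from collections import deque


def find_marker_centroids(frame, threshold=60):
    """Return (x, y) centroids of dark blobs in a grayscale frame.

    frame: list of rows, each a list of 0-255 intensities.
    Assumes markers are darker than the surrounding gel.
    """
    h, w = len(frame), len(frame[0])
    seen = [[False] * w for _ in range(h)]
    centroids = []
    for y0 in range(h):
        for x0 in range(w):
            if seen[y0][x0] or frame[y0][x0] >= threshold:
                continue
            # Breadth-first flood fill over 4-connected dark pixels.
            queue = deque([(x0, y0)])
            seen[y0][x0] = True
            sx = sy = count = 0
            while queue:
                x, y = queue.popleft()
                sx, sy, count = sx + x, sy + y, count + 1
                for nx, ny in ((x + 1, y), (x - 1, y), (x, y + 1), (x, y - 1)):
                    if not (0 <= nx < w and 0 <= ny < h):
                        continue
                    if seen[ny][nx] or frame[ny][nx] >= threshold:
                        continue
                    seen[ny][nx] = True
                    queue.append((nx, ny))
            centroids.append((sx / count, sy / count))
    return centroids


def match_markers(ref_pts, cur_pts, max_dist=5.0):
    """Greedily pair each reference marker with its nearest unused current marker.

    Returns a list of (ref_point, cur_point) pairs; markers that moved more
    than max_dist pixels are left unmatched.
    """
    def sq_dist(a, b):
        return (a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2

    pairs = []
    unused = list(cur_pts)
    for r in ref_pts:
        if not unused:
            break
        best = min(unused, key=lambda c: sq_dist(r, c))
        if sq_dist(r, best) <= max_dist ** 2:
            pairs.append((r, best))
            unused.remove(best)
    return pairs
```

Greedy matching like this only works while markers move less than about half the marker spacing between frames. Faster motion would call for something more robust, such as optical flow or a global assignment method.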

Reinforcement Learning (RL)

I explored various RL algorithms that take the marker flow as input. However, since existing Gelsight simulations are not well developed, I ran the experiments on a physical robot, which was very tedious and unfortunately yielded no noticeable results. Nevertheless, I gained an extensive understanding of reinforcement learning and the robotics research workflow through this experience!

Surprise!

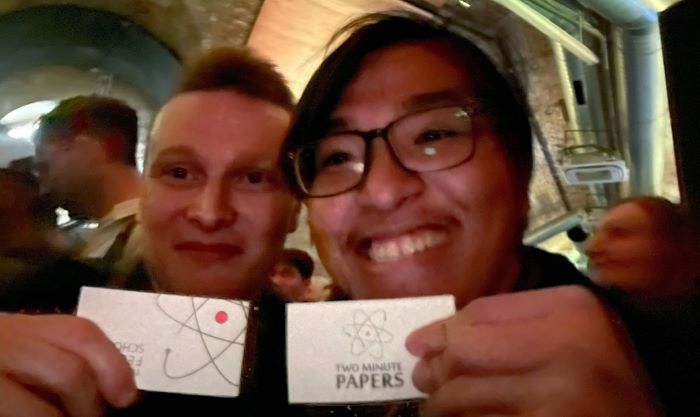

I also went to a machine learning meetup in London and happened to meet Dr Károly Zsolnai-Fehér from Two Minute Papers, a channel reporting on the latest developments in machine learning and computer graphics.

“What a time to be alive!”
